From GitHub Issue to Merged PR: Building an Autonomous Dev Pipeline with Claude Code


We run 25+ repositories at [Stackbilt](https://stackbilt.dev?utm_source=blog&utm_medium=post&utm_campaign=dev-pipeline). One founder. Issues pile up. The boring stuff — doc fixes, test gaps, type errors — never gets prioritized because there's always something more urgent.

So we built a system where an AI agent picks up labeled GitHub issues, writes the fix, opens a PR, and posts a summary. No human in the loop until code review.

This post walks through the architecture, the governance model that keeps it safe, and the failure modes we've hit along the way.

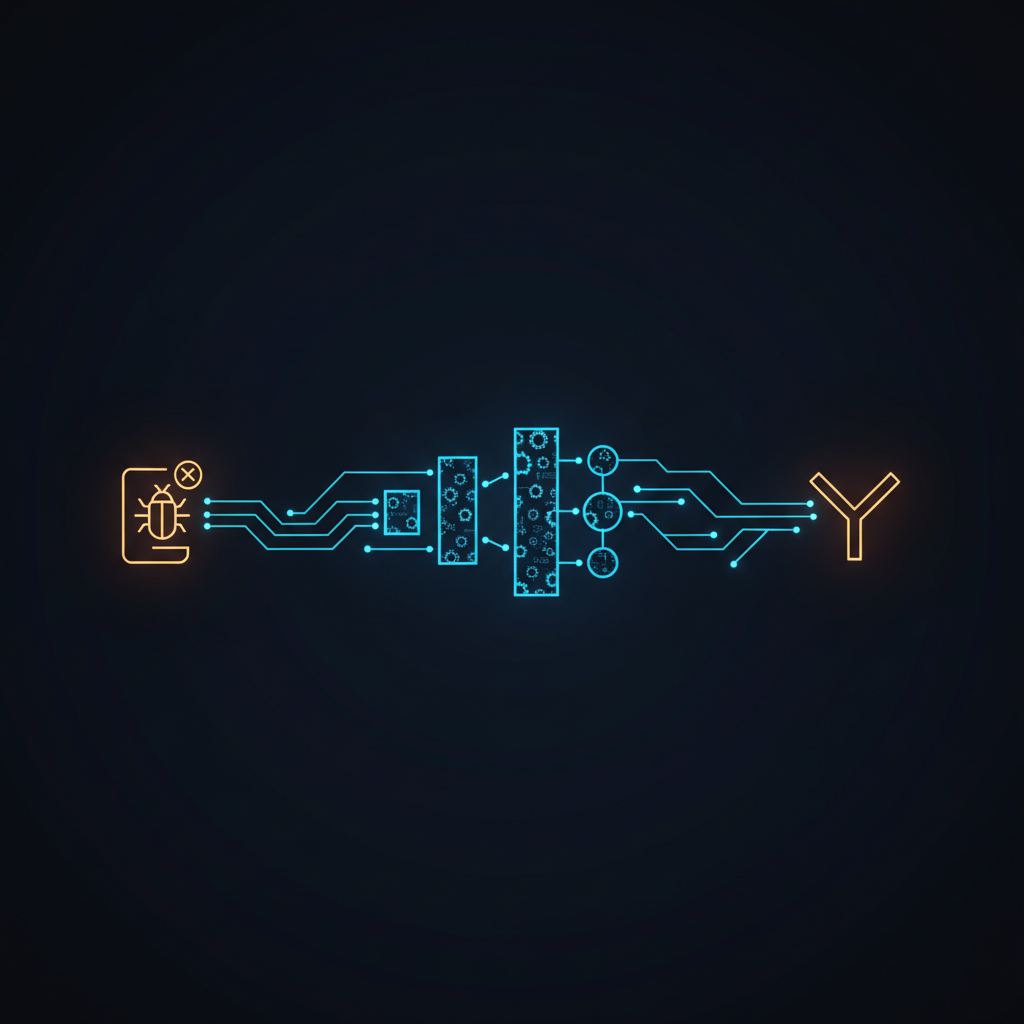

The pipeline

The flow is simple:

→ Issue Watcher (hourly cron)

→ Task Queue (D1)

→ cc-taskrunner (Claude Code session)

→ Auto-PR on auto/{category}/{task-id} branch

→ Session digest posted to AEGIS

The [cc-taskrunner](https://github.com/Stackbilt-dev/cc-taskrunner) is open source. It's a Bash orchestrator that pulls tasks from a queue, spins up Claude Code sessions with structured prompts, and handles the full lifecycle: start, monitor, capture output, report results.

Each task gets a full Claude Code session with the target repo checked out. The prompt includes the issue title, body, labels, and specific instructions: read the code first, follow existing patterns, run typecheck, commit with a reference to the issue number.
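Here's a minimal sketch of how a prompt like that could be assembled from an issue. The `Issue` shape, the `build_prompt` name, and the exact instruction wording are our own illustration, not cc-taskrunner's actual template (which lives in a Bash script).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Issue:
    # Hypothetical shape of an issue as fetched by the watcher.
    number: int
    title: str
    body: str
    labels: list[str] = field(default_factory=list)


# Paraphrase of the instructions described above; the real wording may differ.
INSTRUCTIONS = [
    "Read the relevant code before changing anything.",
    "Follow the patterns already used in this repository.",
    "Run the typecheck and fix any errors you introduce.",
    "Reference issue #{number} in your commit message.",
]


def build_prompt(issue: Issue) -> str:
    """Combine issue title, labels, body, and fixed instructions into one prompt."""
    steps = "\n".join(
        f"{i}. {step.format(number=issue.number)}"
        for i, step in enumerate(INSTRUCTIONS, start=1)
    )
    labels = ", ".join(issue.labels) or "(none)"
    return (
        f"Issue #{issue.number}: {issue.title}\n"
        f"Labels: {labels}\n\n"
        f"{issue.body.strip()}\n\n"
        f"Instructions:\n{steps}\n"
    )
```

Keeping the instructions as a fixed list means every session gets the same ground rules regardless of how the issue itself was written.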

Governance tiers

Not every task should run unsupervised. We classify tasks into two authority levels:

**`auto_safe`** — executes immediately, no approval needed:

- Documentation fixes

- Test additions

- Research tasks

- Refactors

**`proposed`** — requires operator approval before execution:

- Bug fixes

- New features

The classification is deterministic — no LLM involved. GitHub issue labels map directly to categories:

bug → bugfix (proposed)

enhancement → feature (proposed)

documentation → docs (auto_safe)

test → tests (auto_safe)

research → research (auto_safe)

refactor → refactor (auto_safe)

The label is the classifier. If an issue has both `bug` and `documentation`, the first match wins. No ambiguity, no hallucinated categories.
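A deterministic classifier like this fits in a few lines. This is a sketch, not the production code: the `bug → bugfix` entry is inferred from the `bug` label and the `auto/bugfix/` branch example elsewhere in this post, and we assume "first match" means the first entry in an ordered table rather than the order in which labels appear on the issue.

```python
from __future__ import annotations

# Ordered table: label -> (category, authority). Dicts keep insertion order,
# so "first match" means the first entry here that the issue carries.
LABEL_MAP: dict[str, tuple[str, str]] = {
    "bug": ("bugfix", "proposed"),  # inferred entry (see lead-in)
    "enhancement": ("feature", "proposed"),
    "documentation": ("docs", "auto_safe"),
    "test": ("tests", "auto_safe"),
    "research": ("research", "auto_safe"),
    "refactor": ("refactor", "auto_safe"),
}


def classify(labels: list[str]) -> tuple[str, str] | None:
    """Return (category, authority) for the first mapped label, else None."""
    present = set(labels)
    for label, result in LABEL_MAP.items():
        if label in present:
            return result
    return None  # no mapped label: the issue is not queued (assumption)
```

Because `bug` sits first in this sketch's table, an issue labeled both `bug` and `documentation` lands in the `proposed` tier, so ambiguity resolves toward the stricter gate.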

Safety hooks

The taskrunner runs with safety hooks that block dangerous operations:

- **No interactive prompts** — `AskUserQuestion` is blocked. If the agent needs clarification, it outputs `TASK_BLOCKED` with an explanation instead of hanging.

- **No destructive git ops** — force push, reset hard, checkout with file discard are all blocked.

- **No production deploys** — deploy scripts and `wrangler deploy` are blocked.

- **No secret access** — reading `.env`, `.dev.vars`, or credential files is blocked.

If a hook blocks an action, the task fails gracefully with a clear error. The operator reviews and either fixes the issue or restructures the task.
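Claude Code's `PreToolUse` hooks receive the pending tool call as JSON on stdin (including `tool_name` and `tool_input`), and exiting with code 2 blocks the call and shows stderr to the agent. A guard along these lines could enforce the rules above. It's a sketch, not cc-taskrunner's actual hook: the regexes, the `check`/`main` names, and the secret-file patterns are illustrative and deliberately not exhaustive.

```python
from __future__ import annotations

import json
import os
import re
import sys

# Bash commands to refuse (illustrative patterns, not a complete denylist).
BLOCKED_COMMANDS = [
    (re.compile(r"\bgit\s+push\b.*\s(--force|-f)\b"), "force push"),
    (re.compile(r"\bgit\s+reset\s+--hard\b"), "hard reset"),
    (re.compile(r"\bgit\s+checkout\b.*\s(--|\.)(\s|$)"), "checkout that discards changes"),
    (re.compile(r"\bwrangler\s+deploy\b"), "production deploy"),
    (re.compile(r"\b(npm|pnpm|yarn)\s+run\s+deploy\b"), "deploy script"),
    (re.compile(r"(^|[\s/'\"])\.(env|dev\.vars)\b"), "secret file access"),
]
# File names that file-based tools (Read, Edit, ...) may not touch.
SECRET_FILES = re.compile(r"^(\.env(\..+)?|\.dev\.vars)$")


def check(tool_name: str, tool_input: dict) -> str | None:
    """Return a reason if the tool call must be blocked, else None."""
    if tool_name == "AskUserQuestion":
        return "interactive prompts are disabled; output TASK_BLOCKED instead"
    if tool_name == "Bash":
        command = tool_input.get("command", "")
        for pattern, reason in BLOCKED_COMMANDS:
            if pattern.search(command):
                return f"blocked: {reason}"
    path = tool_input.get("file_path", "")
    if path and SECRET_FILES.match(os.path.basename(path)):
        return "blocked: secret file access"
    return None


def main(stdin=sys.stdin) -> int:
    """Hook entry point: returns 2 to block the call, 0 to allow it."""
    event = json.load(stdin)
    reason = check(event.get("tool_name", ""), event.get("tool_input", {}))
    if reason:
        print(reason, file=sys.stderr)  # stderr is shown to the agent on exit code 2
        return 2
    return 0
```

Registered as a `PreToolUse` hook command, the script would end with `sys.exit(main())`. Keeping `check` pure makes the denylist easy to unit-test.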

Auto-PR creation

When a task completes successfully, the taskrunner:

1. Creates a branch named `auto/{category}/{task-id}` (e.g., `auto/bugfix/a1b2c3d4`)

2. Pushes the commits

3. Opens a PR with a structured body: task ID, authority level, original prompt, result summary

4. Includes the notice "Generated by AEGIS task runner. Review before merging." in every PR body
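The branch name and PR body from the steps above can be sketched as two small functions. `TaskResult` and its fields are hypothetical, and the Markdown layout of the body is our guess at "structured"; only the branch pattern and the footer text come from this post.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    # Hypothetical record of a finished task; field names are illustrative.
    task_id: str
    category: str
    authority: str
    prompt: str
    summary: str


FOOTER = "Generated by AEGIS task runner. Review before merging."


def branch_name(task: TaskResult) -> str:
    # auto/{category}/{task-id}, e.g. auto/bugfix/a1b2c3d4
    return f"auto/{task.category}/{task.task_id}"


def pr_body(task: TaskResult) -> str:
    """Task ID, authority level, original prompt, result summary, then the footer."""
    return "\n".join([
        f"**Task ID:** {task.task_id}",
        f"**Authority:** {task.authority}",
        "",
        "## Original prompt",
        task.prompt,
        "",
        "## Result summary",
        task.summary,
        "",
        "---",
        FOOTER,
    ])
```

One way to open the PR from these values is the GitHub CLI (`gh pr create --head <branch> --title <title> --body <body>`); we're not claiming that's what the taskrunner itself calls.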

The operator reviews the PR like any other. Approve, request changes, or close. The agent tracks outcomes — which PRs get merged vs closed — and feeds that signal back into memory so future proposals get sharper over time.

The dreaming cycle

Beyond reacting to labeled issues, the system proactively proposes work. A nightly "dreaming cycle" reviews:

- Recent commits across all watched repos

- Error patterns in execution history

- Codebase gaps (missing tests, stale docs, type errors)

It generates task proposals with a title, target repo, detailed prompt, category, and rationale. Generated tasks go through the same governance gate: `auto_safe` categories execute immediately, everything else waits for approval.

Each cycle has guardrails: at most 3 proposals per run, a minimum prompt length of 80 characters (shorter prompts produce garbage), and a validated repo allowlist so the agent can't propose work on repos that don't exist.
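These guardrails reduce to a simple filter. In this sketch, invalid proposals are dropped before the cap is applied (an assumption; the post doesn't say which comes first), and the allowlist is seeded with the open-source repos linked at the end of this post purely for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_PROPOSALS = 3
MIN_PROMPT_CHARS = 80
# Illustrative allowlist; a real one would list every watched repo.
REPO_ALLOWLIST = {"cc-taskrunner", "charter", "stackbilt-mcp-gateway"}


@dataclass
class Proposal:
    title: str
    repo: str
    prompt: str
    category: str
    rationale: str


def filter_proposals(proposals: list[Proposal]) -> list[Proposal]:
    """Drop short-prompt or unknown-repo proposals, then keep at most three."""
    valid = [
        p for p in proposals
        if len(p.prompt.strip()) >= MIN_PROMPT_CHARS and p.repo in REPO_ALLOWLIST
    ]
    return valid[:MAX_PROPOSALS]
```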

What works well

The system excels at exactly the work humans deprioritize:

- **Documentation drift** — when code changes but docs don't, the agent catches it and opens a fix PR. These get merged at a high rate because the scope is tight and the risk is low.

- **Test coverage gaps** — "add tests for X" issues are well-scoped enough for autonomous execution. The agent reads the existing test patterns and follows them.

- **Type error cleanup** — TypeScript strict mode violations, missing type annotations, unused imports. Tedious for humans, trivial for agents.

What breaks

We track failure patterns systematically. The most common:

**`completion_signal_missing`** — the agent finishes work but doesn't output the expected `TASK_COMPLETE` signal, so the taskrunner can't distinguish "done" from "stuck." We saw this 11+ times in a single week. Mitigation: the taskrunner now checks for new git commits as a secondary completion signal.
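A sketch of that fallback, assuming the runner records the commit the session started from (`base_ref`) and captures the session's text output. The marker-precedence rule (`TASK_BLOCKED` wins) and the status names are our assumptions, not cc-taskrunner's behavior.

```python
import subprocess


def commits_since(repo_dir: str, base_ref: str) -> int:
    """Count commits reachable from HEAD but not from base_ref."""
    out = subprocess.run(
        ["git", "-C", repo_dir, "rev-list", "--count", f"{base_ref}..HEAD"],
        capture_output=True, text=True, check=True,
    )
    return int(out.stdout.strip())


def task_status(output: str, new_commits: int) -> str:
    """Classify a session from its output markers, falling back to commits."""
    if "TASK_BLOCKED" in output:
        return "blocked"
    if "TASK_COMPLETE" in output:
        return "complete"
    if new_commits > 0:
        # Secondary signal: work was committed even though the marker is missing.
        return "complete"
    return "incomplete"
```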

**Large file timeouts** — files over 800 lines cause sessions to hit turn limits before finishing. The taskrunner now scans task prompts for file paths and auto-bumps `max_turns` for large files (800+ LOC → 40 turns, 1500+ LOC → 50 turns).
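The turn-budget bump can be expressed as a small function over line counts. The path regex is a rough heuristic of our own, and `default` stands in for whatever base `max_turns` your runner uses, since the post doesn't state it. Only the 800/1500 thresholds and the 40/50 budgets come from the text above.

```python
from __future__ import annotations

import re
from pathlib import Path

# Rough heuristic for file paths in a prompt, e.g. "src/auth/session.ts".
PATH_RE = re.compile(r"[\w./-]+\.[A-Za-z]{1,5}\b")


def turns_for_loc(loc: int, default: int) -> int:
    """Turn budget for one file: 800+ LOC -> 40 turns, 1500+ LOC -> 50 turns."""
    if loc >= 1500:
        return max(default, 50)
    if loc >= 800:
        return max(default, 40)
    return default


def line_count(path: Path) -> int:
    with path.open("rb") as f:
        return sum(1 for _ in f)


def max_turns_for(prompt: str, repo_root: Path, default: int) -> int:
    """Largest budget required by any existing file the prompt mentions."""
    turns = default
    for candidate in set(PATH_RE.findall(prompt)):
        path = repo_root / candidate
        if path.is_file():
            turns = max(turns, turns_for_loc(line_count(path), default))
    return turns
```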

**Vague prompts** — "Improve the auth system" produces scattered, unfocused changes across too many files. The 80-character minimum helps, but the real fix is writing task prompts like you'd write a junior engineer's ticket: specific file, specific behavior, specific acceptance criteria.

**Phantom repos** — the dreaming cycle once proposed tasks targeting repos that don't exist on GitHub (a BizOps project name that didn't match any actual repo). Fixed by adding an alias map and a strict repo allowlist.
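Alias resolution plus an allowlist check might look like this. The alias entries are hypothetical examples; the point is that a name is mapped first and only then checked against the allowlist, so an unmapped project name like `BizOps` is rejected instead of becoming a task.

```python
from __future__ import annotations

REPO_ALLOWLIST = {"cc-taskrunner", "charter", "stackbilt-mcp-gateway"}
# Internal project names -> real GitHub repo names (hypothetical entries).
REPO_ALIASES = {
    "mcp-gateway": "stackbilt-mcp-gateway",
    "taskrunner": "cc-taskrunner",
}


def resolve_repo(name: str) -> str | None:
    """Map an alias to its repo, then accept it only if it is allowlisted."""
    key = name.strip().lower()
    candidate = REPO_ALIASES.get(key, key)
    return candidate if candidate in REPO_ALLOWLIST else None
```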

Try it yourself

The [cc-taskrunner](https://github.com/Stackbilt-dev/cc-taskrunner) is open source. It works with any repo and any task queue — you can adapt it to your own workflow.

The governance framework behind our context management, [Charter](https://github.com/Stackbilt-dev/charter) (ADF), is also open source under Apache-2.0.

The [MCP Gateway](https://github.com/Stackbilt-dev/stackbilt-mcp-gateway) shows how we wire multiple products through a single OAuth-authenticated MCP endpoint.

See the full ecosystem at [github.com/Stackbilt-dev](https://github.com/Stackbilt-dev).

---

*This post describes infrastructure built at [Stackbilt](https://stackbilt.dev?utm_source=blog&utm_medium=post&utm_campaign=dev-pipeline) — a developer platform offering AI image generation and architecture scaffolding via MCP. The autonomous pipeline described here powers our internal development workflow across all Stackbilt products.*